Appwrite meets OpenTelemetry

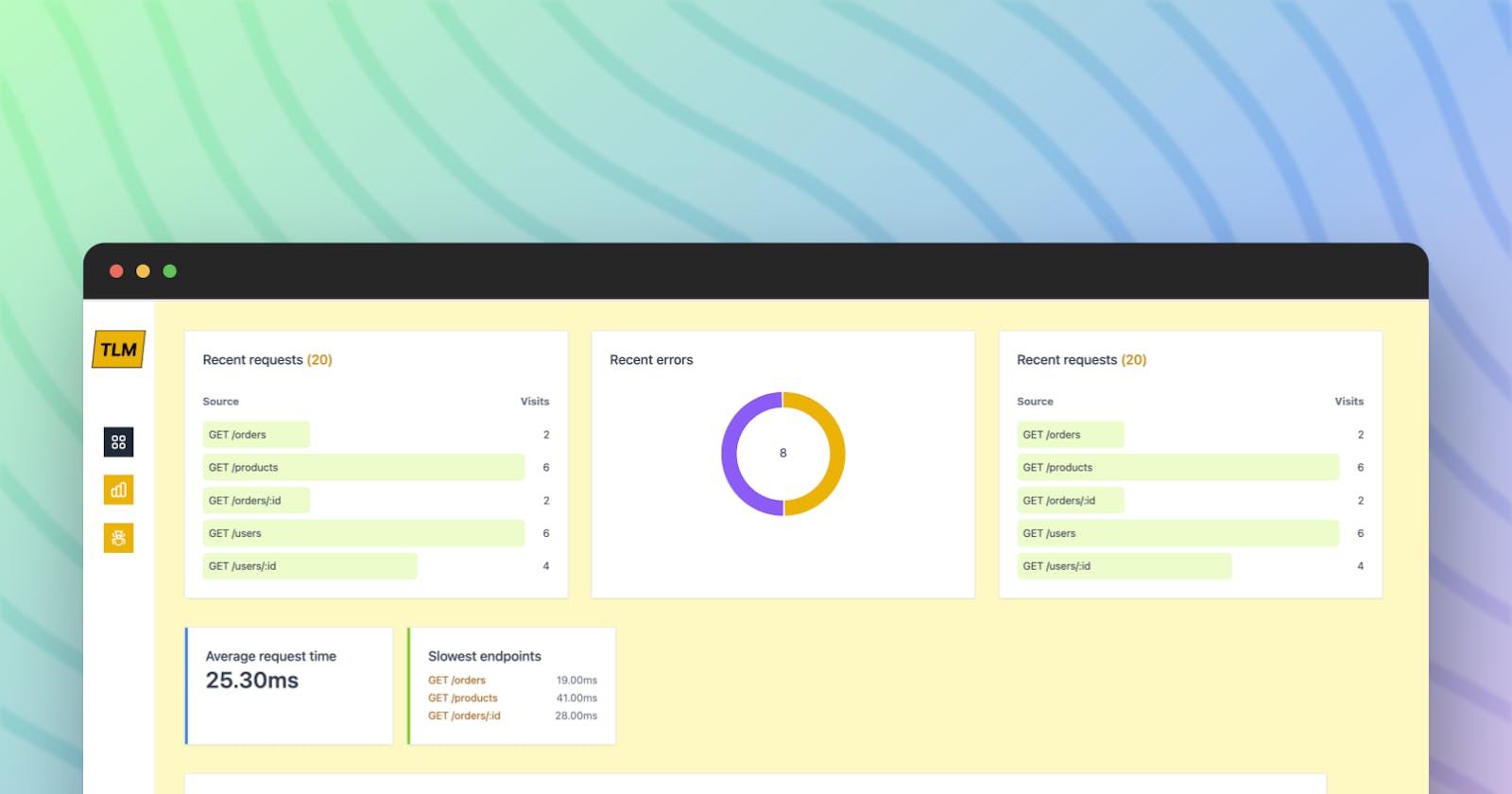

I built a minimal service and UI that use Appwrite to store and display OpenTelemetry data.

Team:

- Akhil Gautam https://github.com/akhil-gautam

Project Description

This app lets Appwrite act as a collector for OpenTelemetry data and visualises that data in a UI. It uses Appwrite's database and its attributes to store the data in a format that is efficient to store and read.

Tech stack

- Appwrite database (https://github.com/akhil-gautam/optl-micro-service/blob/4d615ed4760e49b775ff767342ac9667da9f20c5/package.json#L16)
- Node.js/Express
- React.js
- TailwindCSS & Tremor

Demo

Deployed dashboard: https://appmetry.vercel.app/

Motivation

Recently I was learning about OpenTelemetry and its use cases. As I was looking into exporters and collectors for my OTLP (OpenTelemetry Protocol) data, there were certainly many options. Then I saw Appwrite's hackathon and went through their docs. It seemed pretty straightforward to use from the Node SDK.

How did it start?

The plan was to generate OTLP data by integrating OpenTelemetry into an app, store it in Appwrite via a microservice, and then have a UI that reads it back from Appwrite (via the microservice).

Source app

I started with a simple Express app backed by SQLite as the source of OTLP data. The app generates some dummy data and exposes a few routes that mimic a real-world scenario. Then came the integration of OpenTelemetry into the app, which was simple enough using the @opentelemetry/instrumentation-express package. Let's look at the configuration:

// instrumentation.js

const { HttpInstrumentation } = require('@opentelemetry/instrumentation-http');

const {

ExpressInstrumentation,

} = require('@opentelemetry/instrumentation-express');

const opentelemetry = require('@opentelemetry/api');

const { Resource } = require('@opentelemetry/resources');

const {

SemanticResourceAttributes,

} = require('@opentelemetry/semantic-conventions');

const { NodeTracerProvider } = require('@opentelemetry/sdk-trace-node');

const { registerInstrumentations } = require('@opentelemetry/instrumentation');

const {

BatchSpanProcessor,

} = require('@opentelemetry/sdk-trace-base');

const {

OTLPTraceExporter,

} = require('@opentelemetry/exporter-trace-otlp-http');

const collectorOptions = {

// url should be the URL of the optl-microservice

// that is: https://github.com/akhil-gautam/optl-micro-service

url: 'http://localhost:3001/traces',

concurrencyLimit: 10, // an optional limit on pending requests

};

registerInstrumentations({

instrumentations: [

new HttpInstrumentation(),

new ExpressInstrumentation(),

],

});

const resource = Resource.default().merge(

new Resource({

[SemanticResourceAttributes.SERVICE_NAME]: 'express-app-sqlite',

[SemanticResourceAttributes.SERVICE_VERSION]: '0.1.0',

})

);

const provider = new NodeTracerProvider({

resource: resource,

});

const exporter = new OTLPTraceExporter(collectorOptions);

const processor = new BatchSpanProcessor(exporter);

provider.addSpanProcessor(processor);

provider.register();

opentelemetry.trace.setGlobalTracerProvider(provider);

module.exports = {

provider,

};

This is the most basic configuration of instrumentation.js. The part to notice is collectorOptions, where the url points to the microservice. The microservice exposes a POST endpoint, /traces, that takes the OTLP data, formats it and uploads it to the Appwrite database.
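To make the shape of that request body concrete, here is a trimmed sketch of the JSON the exporter might POST to /traces. The nesting (resourceSpans, then scopeSpans, then spans) follows the OTLP trace format; every ID, timestamp and attribute value below is made up for illustration, and countSpans is a hypothetical helper rather than code from the project.

```javascript
// Hypothetical, trimmed OTLP/HTTP JSON body as it might arrive at /traces.
// All IDs, timestamps and attribute values are invented for this example.
const samplePayload = {
  resourceSpans: [
    {
      resource: {
        attributes: [
          { key: 'service.name', value: { stringValue: 'express-app-sqlite' } },
        ],
      },
      scopeSpans: [
        {
          scope: { name: '@opentelemetry/instrumentation-http' },
          spans: [
            {
              traceId: '5b8efff798038103d269b633813fc60c',
              spanId: 'eee19b7ec3c1b174',
              name: 'GET',
              kind: 2,
              startTimeUnixNano: '1686000000000000000',
              endTimeUnixNano: '1686000000050000000',
              attributes: [
                { key: 'http.method', value: { stringValue: 'GET' } },
              ],
              status: { code: 0 },
            },
          ],
        },
      ],
    },
  ],
};

// Walk the three nesting levels and count the spans in one request body.
function countSpans(payload) {
  let total = 0;
  for (const resourceSpan of payload.resourceSpans ?? []) {
    for (const scopeSpan of resourceSpan.scopeSpans ?? []) {
      total += (scopeSpan.spans ?? []).length;
    }
  }
  return total;
}

console.log(countSpans(samplePayload)); // 1
```

Note that attribute values are wrapped by type (for example `{ stringValue: ... }`), which is why any code reading them has to unwrap the value first.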

In order for instrumentation.js to kick in, we added it to the start script so that whenever we boot our app, it will start instrumentation:

// package.json

"scripts": {

"start": "node --require ./instrumentation.js app.js"

},

That's all: whenever the server starts, auto-instrumentation starts with it. The problem I saw was that I could not get the auto-instrumentation to catch errors and add them to the traces. I spent a lot of time in the OpenTelemetry docs looking for an option to automate that, but with no success, so I had to add a custom middleware to the app.

// app.js

const opentelemetry = require('@opentelemetry/api');

// ...

// global error handler middleware

function errorHandler(err, req, res, next) {

const tracer = opentelemetry.trace.getTracer('errorTracer');

tracer.startActiveSpan('error', (span) => {

span.setAttribute('component', 'errorHandler');

span.setAttribute('errMsg', err.stack);

span.setAttribute('errorCode', 1);

// req.route is undefined when the error is raised before a route matches

span.setAttribute('route', `${req.method} ${req.route?.path ?? req.originalUrl}`);

span.end();

});

res.status(500).json({ error: 'Internal Server Error' });

}

// use this middleware after all the routes

app.use(errorHandler);

app.listen(port, () => {

console.log(`Server is listening on port ${port}`);

});
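One thing to watch in a handler like this: Express only populates req.route once a route has matched, so an error raised earlier (for example in a body parser) arrives without it. The sketch below pulls the attribute-building step into a plain function so it can be exercised without a running server. buildErrorAttributes and fakeReq are hypothetical names, not part of the demo app.

```javascript
// Hypothetical helper: build the attributes for the custom error span
// without assuming that a route was matched.
function buildErrorAttributes(err, req) {
  // Fall back to the raw URL when Express has not set req.route
  const path = req.route?.path ?? req.originalUrl ?? 'unknown';
  return {
    component: 'errorHandler',
    errMsg: err.stack ?? String(err),
    errorCode: 1,
    route: `${req.method} ${path}`,
  };
}

// Stand-in for a request that failed before any route matched.
const fakeReq = { method: 'POST', originalUrl: '/users' };
console.log(buildErrorAttributes(new Error('invalid JSON'), fakeReq).route);
// POST /users
```

Inside the middleware, the result could be attached in one call with span.setAttributes(buildErrorAttributes(err, req)) in place of the individual setAttribute calls.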

Microservice to process otlp data

Now that the demo app was in place, it was time to ingest the data received at the /traces endpoint and upload it to Appwrite. For the microservice, I created another Express app with Appwrite's Node SDK. My plan was to push the data to Appwrite as JSON and keep it simple. That plan was soon crushed when I found that Appwrite doesn't support JSON columns. I tried storing the stringified data in a string column on Appwrite, but that seemed infeasible because of performance issues.

After spending a day thinking about it, I thought most of the data would not be needed anyway and if we can format the data and make it granular, we can simply utilize appwrite as a relational database.

I created matching columns (attributes) on Appwrite and then wrote a formatter that produces only those columns from the OTLP data:

// utils.js

function processSpans(formData) {

const spans = [];

for (const spanData of formData.resourceSpans) {

for (const span of spanData.scopeSpans) {

const { scope } = span;

for (const s of span.spans) {

const error_code = scope && scope.name === 'errorTracer' ? 1 : 0;

const error =

error_code === 1

? s.attributes?.find((el) => el.key === 'errMsg')?.value?.stringValue

: null;

const name = s.attributes?.find((el) => el.key === 'route')?.value

?.stringValue;

const {

attributes,

droppedAttributesCount,

events,

droppedEventsCount,

status,

links,

droppedLinksCount,

...rest

} = s;

spans.push({ ...rest, error_code, error, name: name || rest.name });

}

}

}

return spans;

}

module.exports = {

processSpans,

};

This formatter removes the attributes, droppedAttributesCount, events, droppedEventsCount, status, links and droppedLinksCount keys from the OTLP data. It also checks whether a span is an error; if so, it pulls the stack trace from the custom span added in the source app's middleware and stores it as a string in the error column of the Appwrite database. This wasn't as smooth as it looks: the formatter threw a lot of errors while parsing the OTLP data, but it finally reached a working state.
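Once the formatter has produced flat rows, each row can be written to Appwrite as one document. The following is only a sketch of that step, not the microservice's actual code. It assumes `databases` behaves like a node-appwrite `Databases` instance exposing createDocument(databaseId, collectionId, documentId, data). DATABASE_ID, COLLECTION_ID, uploadSpans, makeId and the fake client are all placeholders introduced here so the example runs without an Appwrite project.

```javascript
// Placeholder IDs, not values from the project.
const DATABASE_ID = 'telemetry';
const COLLECTION_ID = 'spans';

// Write each flattened span row as one document.
// `databases` is assumed to expose
// createDocument(databaseId, collectionId, documentId, data).
async function uploadSpans(databases, rows, makeId) {
  const results = { stored: 0, failed: [] };
  for (const row of rows) {
    try {
      // Awaiting inside the loop keeps the requests sequential
      await databases.createDocument(DATABASE_ID, COLLECTION_ID, makeId(), row);
      results.stored += 1;
    } catch (err) {
      // Keep going so one bad row does not drop the whole batch
      results.failed.push({ spanId: row.spanId, reason: err.message });
    }
  }
  return results;
}

// Stand-in client that rejects rows without a traceId.
const fakeDatabases = {
  documents: [],
  async createDocument(databaseId, collectionId, documentId, data) {
    if (!data.traceId) throw new Error('traceId is required');
    this.documents.push({ $id: documentId, ...data });
    return this.documents[this.documents.length - 1];
  },
};

let counter = 0;
const demo = uploadSpans(
  fakeDatabases,
  [
    { traceId: 'abc', spanId: '1', name: 'GET /users', error_code: 0, error: null },
    { spanId: '2', name: 'GET /broken', error_code: 1, error: 'Error: boom' },
  ],
  () => `span-${++counter}`
);
demo.then((summary) => console.log(summary));
```

With the real SDK, makeId would typically be replaced by the SDK's own ID helper. Collecting failures instead of throwing is a design choice for this sketch: a telemetry batch with one malformed span still gets the rest stored.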

UI to visualise the data

In order to visualise the data, I had to do two things:

- build APIs in the microservice to fetch data
- build a React app to show them

The UI app is built on React + vite-swc and the prominent packages that I used initially are:

- TailwindCSS
- Tremor.so as a component library

I quickly added API calls in useEffect (not ideal) and was able to get data. Just before I could declare victory, I saw that I was hitting rate limits while developing, because every code edit triggered a re-render and fresh API calls 😢

React-query to the rescue 🎉

I had previously used react-query for caching, but this time I needed it during development as well. I initially configured react-query with a stale time of 10 minutes (changed to 2 minutes at the end) and then went back to building the UI. It really was a lifesaver.
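For reference, a stale time like this is usually set once as a default for all queries. The snippet below is a minimal sketch of such an options object, assuming react-query's `defaultOptions.queries.staleTime` setting. The names TWO_MINUTES_MS and queryClientOptions are chosen here for illustration, and the exact setup in the UI repo may differ.

```javascript
// Stale time from the post's final setting: 2 minutes, in milliseconds.
const TWO_MINUTES_MS = 2 * 60 * 1000;

const queryClientOptions = {
  defaultOptions: {
    queries: {
      // Cached data younger than this is treated as fresh and is not refetched
      staleTime: TWO_MINUTES_MS,
    },
  },
};

// In the React app this object would be passed to the client, e.g.:
//   const queryClient = new QueryClient(queryClientOptions);
//   <QueryClientProvider client={queryClient}>...</QueryClientProvider>
console.log(queryClientOptions.defaultOptions.queries.staleTime); // 120000
```

Setting it as a default means every query benefits without repeating the option on each hook call.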

Appwrite

Appwrite is certainly a great tool to use as a database. It is easier than Atlas when it comes to getting started. I was able to figure out most things from the UI itself, and for the rest I went through the documentation. Whenever I hit an Appwrite-related error, I was able to find a solution in either the docs or GitHub issues.

But there is one area of the documentation that may need more polishing, and that is Queries. It does list examples, but those didn't work for me in the Node SDK, which is why the app I have built is still not in an ideal state.

Query.select, Query.isNull and Query.isNotNull weren't working for me in node-appwrite.

Apart from those, it has been a smooth experience with Appwrite, and I will be using more of it, like Storage and Functions.

Project repos:

Demo app: https://github.com/akhil-gautam/demo-app-optl

Microservice: https://github.com/akhil-gautam/optl-micro-service

UI app: https://github.com/akhil-gautam/telemetry-ui

The apps are not deployed anywhere due to cost issues, nothing is free nowadays 😂

Suggestions and contributions are welcome. Please let me know your thoughts about this application in the comments.

I thank Appwrite and Hashnode for this Hackathon.

#Appwrite #AppwriteHackathon